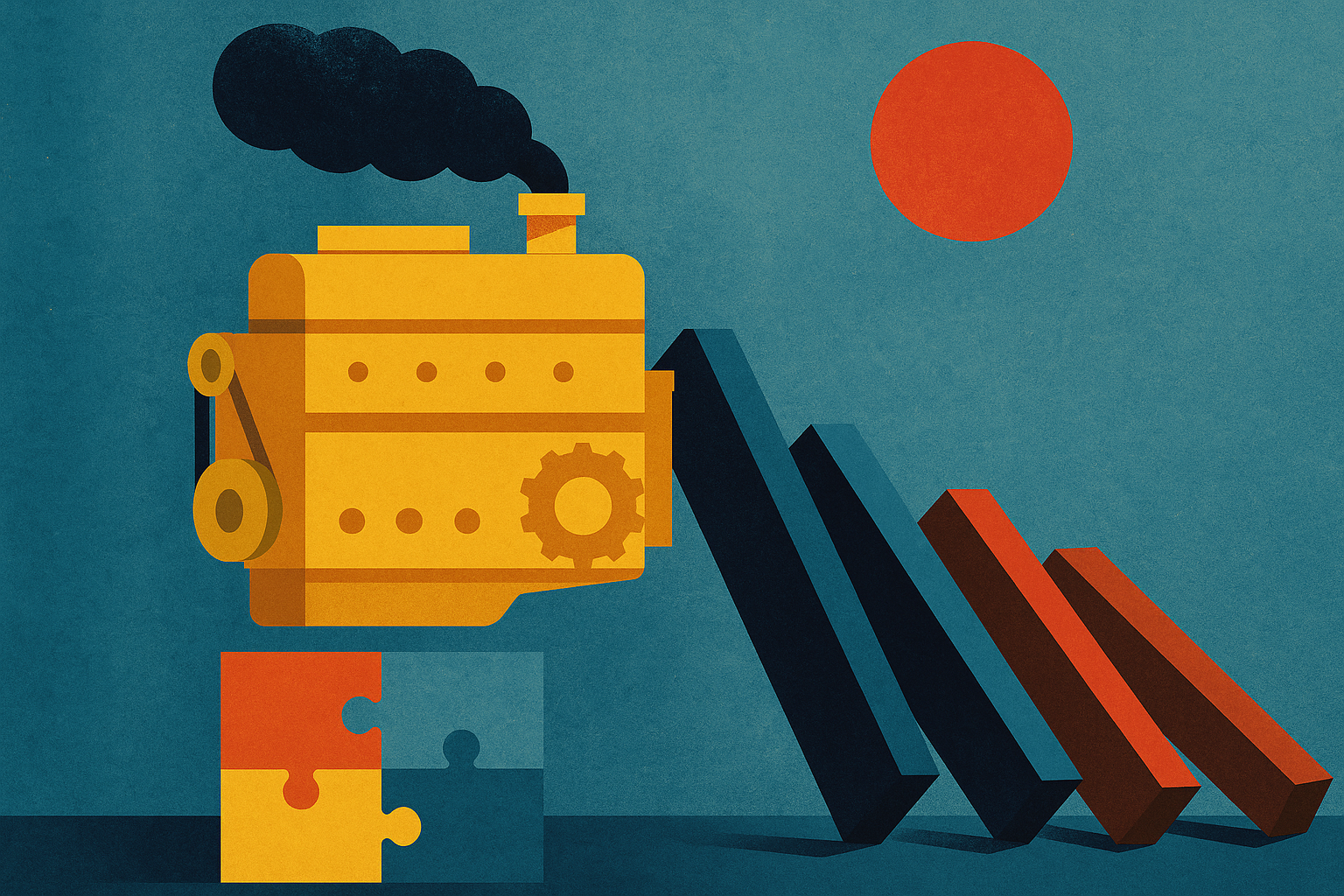

Your ERP Is an Engine of Consequences

Why do so many ERP projects stall even after the integrations “work”? In most cases, the screens load, the API calls succeed, and transactions appear where they should. Yet finance still spends nights reconciling, operations still distrusts the numbers, and every change request turns into a fragile mapping exercise.

What’s at stake isn’t just clean data. It’s whether the organization treats the ERP as a passive container of truth or as the place where business events become binding outcomes. From first principles, an ERP is not a library. It’s a machine. Once an event enters the system, it triggers accounting logic, tax logic, approvals, posting rules, period controls, and downstream reporting. Those consequences are often automatic and difficult to unwind.

When teams treat NetSuite, SAP, or any ERP as a destination for pre-calculated numbers, they bypass the very engine designed to produce those numbers. The result doesn’t look like a system design failure. It looks like a data entry problem: “invalid tax code,” “transaction not in balance,” “posting period closed.” But the root cause is architectural: cooked meals being pushed into a system built to cook.

Truth vs. consequences: what an ERP actually does

“Single source of truth” implies storage. ERPs do store, but their defining role is transformation: converting raw facts into structured, auditable financial records. That transformation depends on context the ERP owns and enforces:

- Master data context: vendor/customer attributes, subsidiaries, locations, items, tax registrations, payment terms.

- Rules and derivations: tax determination, revenue recognition, intercompany logic, multi-book accounting, accrual rules.

- Controls: approvals, period close, segregation of duties, audit trails.

- Posting consequences: GL impact, subledger balances, aging, cash forecasts, compliance reporting.

If upstream systems deliver “final answers” (tax totals, allocation lines, balancing entries) without the inputs and triggers that let the ERP derive them, the ERP can only store the result. It cannot validate intent, explain how the value was derived, or reliably reproduce it when something changes (tax rules, nexus, subsidiary structure, item classification, reporting requirements).

Why “pre-calculated data” creates downstream chaos

When a team calculates outcomes upstream, they implicitly encode rules in spreadsheets, AP tools, or custom apps. Those rules are rarely versioned, tested, or governed like the ERP configuration. Over time, they drift from the ERP’s logic. That drift shows up in predictable failure modes:

- Mismatch between totals and derived components: the header total matches, but tax lines or allocations don’t reconcile with what the ERP expects.

- Context-free journal entries: the ERP receives debits and credits, but lacks the transaction type context required for tax, reporting, or audit classification.

- Irreversible posting effects: an entry “works” but triggers incorrect recognition, incorrect tax filings, or incorrect intercompany elimination because the underlying drivers weren’t present.

- Operational fragility: the integration becomes a set of brittle mappings. Small master data changes break large volumes of transactions.

This is why teams can spend weeks “fixing mappings” and still feel stuck. The ERP is not rejecting the data because it’s pedantic. It’s rejecting it because the transaction structure doesn’t carry the meaning needed to run the ERP’s own logic.

Feed raw ingredients, not a cooked meal

The principle is simple: pass elemental facts and let the system of consequence derive the rest. In practice, this means integrations should transmit:

- Who: vendor/customer, employee, counterparty, related parties.

- What: item/service category, expense type, contract reference.

- Where: subsidiary, location, ship-to/bill-to, tax nexus indicators.

- When: transaction date, service period, fulfillment date.

- How much: base amounts, quantities, currency.

- Triggers: flags that decide which ERP logic path to use (goods vs. services, intercompany vs. third-party, capitalizable vs. expense).

What upstream systems should not push into the ERP as “final truth” are values the ERP is designed to compute: tax amounts, realized FX, system allocations, posting classifications that depend on ERP-only context, or balancing lines created to force acceptance.
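One way to make the raw-ingredients rule concrete is to enforce it at the integration boundary. The sketch below is illustrative only: `BusinessEvent`, `DERIVED_FIELDS`, `build_event`, and every field name are hypothetical and do not come from any ERP's API. It shows a payload that carries facts and triggers, plus a guard that refuses values the ERP is supposed to compute.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import Decimal

# Hypothetical names for values the ERP is expected to derive itself.
DERIVED_FIELDS = {"tax_amount", "realized_fx", "allocation_lines", "balancing_line"}


@dataclass(frozen=True)
class BusinessEvent:
    """Raw facts only; all field names are illustrative assumptions."""
    counterparty_id: str       # who
    category: str              # what
    subsidiary: str            # where
    transaction_date: date     # when
    base_amount: Decimal       # how much
    currency: str
    triggers: dict = field(default_factory=dict)  # e.g. {"intercompany": False}


def build_event(raw: dict) -> BusinessEvent:
    """Build an event, rejecting payloads that carry pre-calculated outcomes."""
    cooked = DERIVED_FIELDS.intersection(raw)
    if cooked:
        raise ValueError(f"derived fields must not be sent: {sorted(cooked)}")
    return BusinessEvent(**raw)
```

Rejecting a cooked payload at the boundary is a design choice: it surfaces the architectural problem early instead of letting it reappear later as a posting error inside the ERP.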

Example: AP and reverse-charge VAT

Consider an invoice captured in an upstream AP tool (Beanworks, Tipalti, or similar). It’s from a Spanish vendor for consulting services billed to a French subsidiary. A common approach is for the AP clerk (or the upstream configuration) to calculate reverse-charge VAT, add tax lines, and send a fully formed journal entry to NetSuite.

This often fails in one of two ways. NetSuite may reject it because the tax lines don’t match its expectations for that vendor, subsidiary, and tax item configuration. Or it may accept it but classify it incorrectly for tax reporting because the transaction lacks the internal triggers that tie the tax to the correct reporting boxes.

A system-native design is different. The upstream tool captures the facts:

- Vendor (Spanish entity)

- Subsidiary (France)

- Date and service period

- Base amount and currency

- Category (services) and location/context fields

That data is passed into NetSuite as a vendor bill (or appropriate transaction type), not as a pre-balanced journal. NetSuite’s tax engine then determines reverse-charge treatment based on nexus, vendor attributes, and configured tax rules. The AP clerk verifies the outcome rather than inventing it. The audit trail lives where the consequence occurs.
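The division of labor in this example can be sketched in a few lines. Everything here is a toy under stated assumptions: `VendorBillFacts`, the lookup tables, and `derive_tax_treatment` are hypothetical stand-ins, not NetSuite's data model or tax engine, and the rule is deliberately oversimplified rather than real VAT logic. The point is only that the upstream tool sends facts and the treatment is derived from master data the ERP owns.

```python
from dataclasses import dataclass
from decimal import Decimal

# Toy stand-ins for ERP-owned master data (hypothetical IDs and values).
VENDOR_COUNTRY = {"V-ES-001": "ES"}
SUBSIDIARY_COUNTRY = {"SUB-FR": "FR"}
EU_COUNTRIES = {"ES", "FR", "DE", "IT"}  # deliberately incomplete


@dataclass(frozen=True)
class VendorBillFacts:
    """What the AP tool sends: facts, with no tax lines and no balancing entries."""
    vendor_id: str
    subsidiary_id: str
    category: str        # "services" or "goods"
    base_amount: Decimal
    currency: str


def derive_tax_treatment(bill: VendorBillFacts) -> str:
    """Toy rule standing in for the ERP tax engine; not real tax logic."""
    vendor = VENDOR_COUNTRY[bill.vendor_id]
    buyer = SUBSIDIARY_COUNTRY[bill.subsidiary_id]
    cross_border_eu = vendor != buyer and {vendor, buyer} <= EU_COUNTRIES
    if cross_border_eu and bill.category == "services":
        return "reverse_charge"
    return "standard"


bill = VendorBillFacts("V-ES-001", "SUB-FR", "services", Decimal("10000.00"), "EUR")
print(derive_tax_treatment(bill))  # reverse_charge
```

Note that the payload contains no tax amount at all. If the vendor's registration or the subsidiary's nexus changes, only the master data changes; the upstream tool keeps sending the same kind of facts.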

Designing integrations as orchestration of logic

Integration is usually described as “moving data from Point A to Point B.” That description invites the mistake of treating the ERP as a bucket: a place to drop finished numbers. A better framing is orchestration: each system does the work it is best suited to do.

- Capture systems are good at intake (OCR, vendor portals, structured forms) and collecting supporting documents.

- Workflow systems are good at approvals, routing, and enforcing who can attest to what.

- ERPs are good at controlled transformation: turning business events into postings, compliance outputs, and standardized reporting structures.

When you design around these strengths, you also get clearer ownership. The upstream tool owns capture quality and completeness. The ERP owns accounting and tax consequences. Finance owns the configuration and controls that govern those consequences.

A practical method: “minimum viable event” payloads

One useful method is to define a minimum viable event for each transaction class (AP invoice, customer invoice, expense report, inventory receipt). The payload includes only what the ERP needs to derive the rest reliably. A simple checklist for each payload:

- Derivation coverage: does the ERP have enough context to compute tax, GL impact, and reporting classification?

- Reproducibility: if rules change later, can the ERP recompute or explain the outcome from stored drivers?

- Auditability: does the ERP record the source document and the decision path (who approved, which configuration applied)?

- Exception design: when the ERP cannot derive something, does it fail fast with a clear queue for correction?
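The checklist above can be turned into a small gate in front of the ERP. This is a minimal sketch, assuming a hypothetical `REQUIRED_DRIVERS` table and a hypothetical `route_event` function; the transaction classes and field names are illustrative, and a real implementation would take them from the ERP's own configuration. It covers derivation coverage and exception design: an incomplete event never reaches posting, and it lands in a queue with a stated reason.

```python
# Hypothetical required drivers per transaction class.
REQUIRED_DRIVERS = {
    "ap_invoice": {
        "vendor_id", "subsidiary_id", "category",
        "transaction_date", "base_amount", "currency",
    },
    "expense_report": {
        "employee_id", "subsidiary_id", "expense_type",
        "transaction_date", "base_amount", "currency",
    },
}


def route_event(txn_class: str, payload: dict, accepted: list, exceptions: list) -> bool:
    """Accept a complete event, or queue it with the reason it cannot be derived."""
    required = REQUIRED_DRIVERS.get(txn_class)
    if required is None:
        exceptions.append({"payload": payload, "reason": f"unknown class: {txn_class}"})
        return False
    # A driver counts as missing when it is absent, None, or an empty string.
    missing = sorted(k for k in required if payload.get(k) in (None, ""))
    if missing:
        exceptions.append({"payload": payload, "reason": f"missing drivers: {missing}"})
        return False
    accepted.append((txn_class, payload))
    return True
```

Failing fast with a named reason gives the upstream owner something specific to correct, which is cheaper than diagnosing a generic posting error after the fact.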

This shifts effort from “mapping every line” to “designing the event.” The integration becomes more stable because it carries intent, not just numbers.

Operating model: verification instead of manual construction

This approach changes roles. Teams stop spending time constructing entries that satisfy the ERP and start verifying what the ERP derives. Verification is a different skill set: reviewing exceptions, checking configuration, and improving master data. It’s also more scalable, because it concentrates expertise where it belongs: inside the system that produces the consequence.

It also reduces silent risk. If an upstream spreadsheet calculates tax, there is rarely a reliable way to prove which version of the logic was used for a given invoice three months later. If NetSuite’s tax engine calculates it, the configuration and audit trail exist alongside the posting.
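To illustrate what “configuration and audit trail alongside the posting” buys you, the sketch below stores the input drivers and a fingerprint of the configuration next to the derived value. It is an assumption-laden toy: `config_fingerprint`, `post_with_audit`, and the flat `tax_rate` setting are hypothetical and do not describe how NetSuite records its audit trail.

```python
import hashlib
import json
from decimal import Decimal


def config_fingerprint(config: dict) -> str:
    """Short, stable identifier for a configuration snapshot."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def post_with_audit(drivers: dict, config: dict) -> dict:
    """Derive a value and keep the drivers and config version next to it."""
    rate = Decimal(config["tax_rate"])      # hypothetical flat-rate setting
    base = Decimal(drivers["base_amount"])
    return {
        "drivers": drivers,
        "tax_amount": str((base * rate).quantize(Decimal("0.01"))),
        "config_version": config_fingerprint(config),
    }


record = post_with_audit({"base_amount": "100.00"}, {"tax_rate": "0.20"})
print(record["tax_amount"])  # 20.00
```

With the drivers and the configuration version stored together, the question “which logic produced this number?” has an answer months later. A spreadsheet calculation rarely leaves that trace.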

Ultimately, the shift is conceptual: stop calling the ERP the source of truth as if it were a static repository. Treat it as the engine of consequences. In practice, that means designing integrations around raw business events and letting the ERP apply controlled logic to produce outcomes you can explain, reproduce, and audit.

The takeaway is straightforward: don’t make your ERP smarter. Get smarter about what you ask it to do, and ensure every upstream system feeds it the ingredients it needs to run its own rules.